A short glossary of human irreplaceability

Postcard #42

Hello and welcome back to another edition of THE POSTCARD, Unregistered’s fortnightly roundup of recommendations.

Thoughts, tools, and treats

AI offers a great opportunity to define more precisely what makes humans unique; in other words, to reflect on “the element of distance between what metrics give you and what you need to make a decision,” as Hollis Robbins phrased it in her essay on “the last mile.” So, instead of yet another lexicon of AI lingo, here’s a small glossary of terms that attempt to capture human singularity.

mētis

Many concepts and intellectual frameworks in today’s AI debate can be traced back to the history of ideas. These include judgment (Kant), the rule-following paradox (Wittgenstein), and the concept of mētis, a form of practical knowledge that enables humans to navigate the intricacies of specific situations and adapt to ever-changing circumstances. Because it resists standardization, mētis also resists digitalization.

Tacit knowledge

The notion of tacit knowledge, put forward by the philosopher of science Michael Polanyi, points in a similar direction. Polanyi famously claimed “that we can know more than we can tell,” for instance when an apprentice acquires practical, implicit, even inarticulable knowledge by observing a master. Chris Walker concludes: “If we take Polanyi seriously, and I think we should, then we can make a precise claim about AI’s limits: if we cannot write down everything we know, then AI cannot learn everything we know.”

Process knowledge

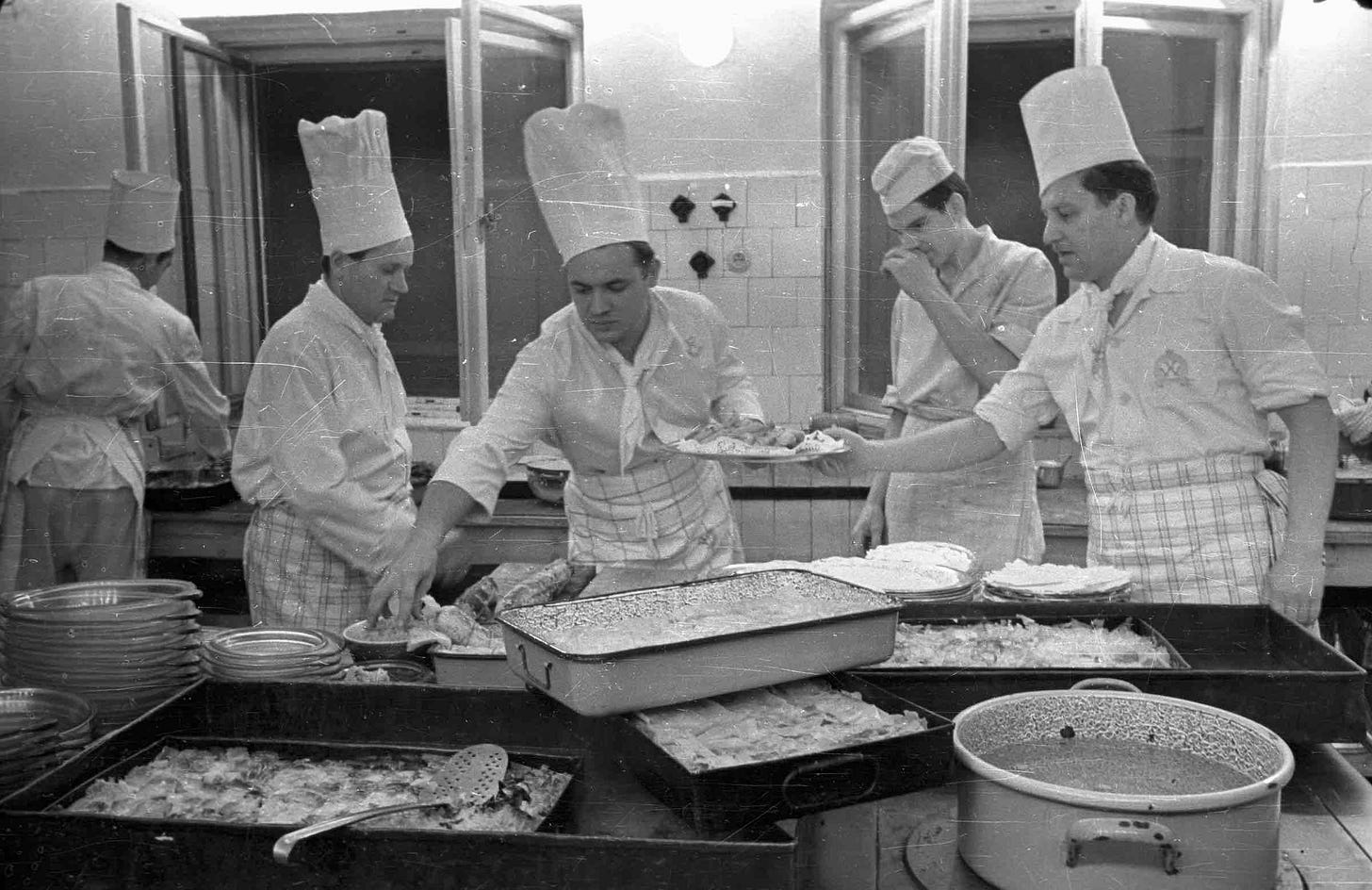

Similarly, Dan Wang makes the case for a kind of experience and expertise that algorithms can’t capture, “all the things that come with learning-by-doing,” and calls it process knowledge: “Process knowledge is the kind of knowledge that’s hard to write down as an instruction. You can give someone a well-equipped kitchen and an extraordinarily detailed recipe, but unless he already has some cooking experience, we shouldn’t expect him to prepare a great dish.”

Local knowledge/context

In his latest article, Chris Walker revives Hayek’s classic argument against central planning, which holds that crucial knowledge is local and embedded in people’s practices. Walker argues that even if AI someday becomes capable of digitizing contextual knowledge, “investing in codification quality upfront, maintaining it as domains evolve, and curating the right codified knowledge for each task” will require “context engineering,” which he defines as “experienced judgment about what matters, applied to an environment where AI does the processing.” The whole piece is eye-opening: “The AI did the processing. The context engineering is what made the processing valuable.”

Taste

Another concept from the history of ideas that is gaining new prominence in the context of AI is “taste.” For example, last week Anthropic co-founder Jack Clark told Ezra Klein that “the thing that is increasingly limited, or the thing that’s going to be the slowest part is having good taste and intuitions about what to do next. Developing and maintaining that taste is going to be the hard thing. Because as you’ve said, taste comes from experience, it comes from reading the primary source material, doing some of this work yourself.” To dig deeper, here’s a collection of classic quotes on the topic, and here’s a practical guide that uses literature as an example.

Noteworthy

“I wonder how many of the people making predictions about the future of truck drivers have ever ridden with one to see what they do? (...) These people have local knowledge that is not easily transferable. They know the quirks of the routes, they have relationships with customers, they learn how best to navigate through certain areas, they understand how to optimize by splitting loads or arranging for return loads at their destination, etc. They also learn which customers pay promptly, which ones provide their loads in a way that’s easy to get on the truck, which ones generally have their paperwork in order, etc. Loading docks are not all equal. Some are very ad-hoc and require serious judgement to be able to maneuver large trucks around them. Never underestimate the importance of local knowledge.”

A mystery link leading into the unknown

Fuel To...

As always,

Dirk

P.S.: Feel free to send me pointers to articles, books, sites, pods, tools, and treats that could be interesting for this roundup. While I cannot promise to link them, I read and appreciate every hint.

Yes, this is exactly the conversation and set of refinements I hoped would blossom after "last mile." Human knowledge is rich, varied, and outside what LLMs will reach (though I expect they'll get closer and closer).

I’ve seen a lot of versions of this “tacit knowledge” argument against AI, and I think it’s a bad argument. It would hold against “logicist” or “symbolic” AI, which is constructed from axioms and inferences drawn from those axioms. But I don’t see how it holds against the “connectionist” AI behind the current AI boom. The whole point of machine learning is that it can pick up patterns from a data set without being explicitly taught. It is far more flexible than traditional symbolic AI (GOFAI) and can pick up context-dependent cues, because it is trained not on explicit rules but on large amounts of data. Think of how LLMs learned to use language: not by learning all the rules of language, but by being exposed to a vast database of language use and picking up its statistical regularities. This isn’t exactly like human tacit knowledge, but it’s similar enough to make the argument unconvincing. Granted, we are still talking about disembodied AIs; they don’t learn in an embodied way like human beings. However, many of the arguments that were valid against symbolic AI don’t hold against connectionist AI. One argument that I think is still valid is that human intelligence is intentional and conscious. AIs follow a mechanical, mathematical process and are not aware of what they are doing. This is why they keep hallucinating.